In 2018 my girlfriend and I decided to invest in new roller blinds for our living room. We needed to cover six windows right next to each other. Luxaflex offered some appealing designs like Duette and Twist. At the time PowerView motorization wasn’t available for Duette, so we settled on Twist. That sums up the introduction and cosmetic part of this review, so let’s move on to the technical part, as this is a technical blog. 🙂

Electrical installation

The first challenge was the electrical installation, since the blinds are powered by 18 V DC. Had it been 230 V AC (like Somfy), I could have powered them from a nearby ceiling mount, but the 18 V DC requirement had to be planned carefully. I didn’t want to settle for batteries, as I would have to recharge six blinds something like three times per year. I’m pretty sure this would annoy me more than I would appreciate the motorization. The only option for me was to drill holes in the ceiling and supply power from there.

But that triggered the next challenge: where to place the power supplies? They would have to be somewhere reachable and close to 230 V. As preparation I installed a new power socket in the attic. Ultimately, however, I decided to pull 15 meters of 2.5 mm² cable over the ceiling to each pair of motors (so motors to the right, left, right, left, right and left) with individual conductors for each motor. All cables end where I have my electrical installation in another part of the house. The official requirement per motor is 1 A at 18 V. This is really to be on the safe side, and after performing some tests and measuring the load I decided to go a bit lower than that: I settled on a single Mean Well DR-100-15 to supply all motors instead of two. That’s ~5.4 A.
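To put the cable choice in numbers, here is a rough voltage-drop estimate for one motor. It is only a sketch under assumptions that are not from my measurements: a textbook resistivity for copper, a 15 m run with one conductor out and one back, and the motor drawing the full official 1 A.

```python
# Assumption: textbook resistivity of copper at about 20 °C, in ohm·mm²/m.
COPPER_RESISTIVITY = 0.0175


def voltage_drop(length_m: float, area_mm2: float, current_a: float) -> float:
    """Voltage lost in a two-conductor DC run (current goes out and back)."""
    loop_resistance = COPPER_RESISTIVITY * (2 * length_m) / area_mm2
    return loop_resistance * current_a


# Assumed worst case: 15 m run, 2.5 mm² conductors, 1 A per motor.
drop = voltage_drop(length_m=15, area_mm2=2.5, current_a=1.0)
print(f"Estimated drop: {drop:.2f} V ({drop / 18 * 100:.1f} % of 18 V)")
```

Under these assumptions the loss is roughly 0.2 V, so the cable itself should not be the limiting factor. Thinner cable or shared conductors would change that picture.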

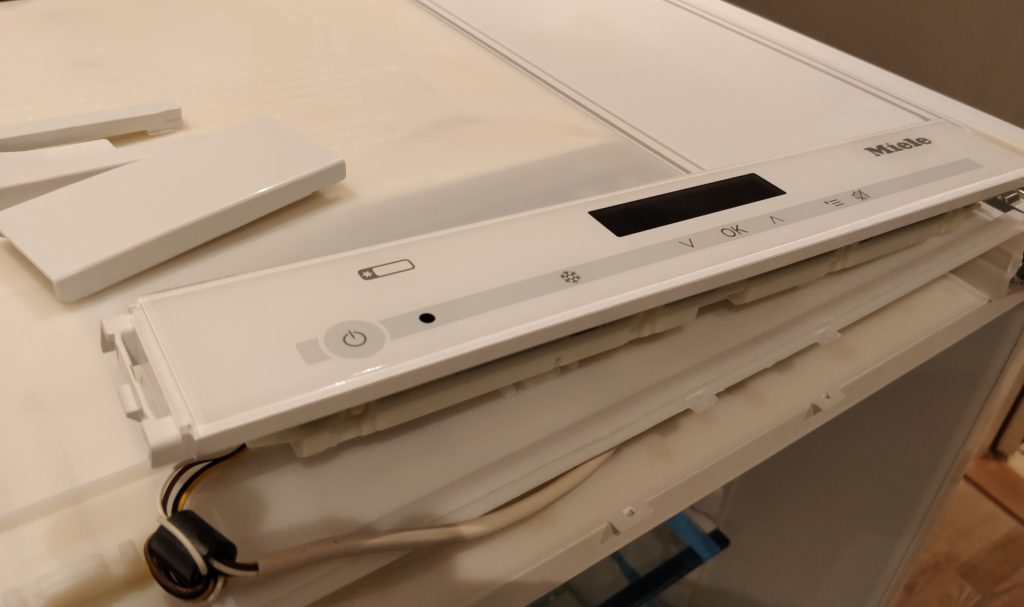

Installation looks like this:

This year we extended our house, and I of course made sure that we would have 2.5 mm² cables with three conductors inside the wall – for each window. This way I’m in full control, and in the future I will be able to easily switch to 230 V motors if needed. The power supply was upgraded to a Mean Well HDR-150-15, which now supplies all blinds, including three new roller blinds in the extended part of the house (~8 A in total):

Quick summary: 18 V is troublesome – separate cables are needed from all locations to a power supply. 230 V would have been the better choice for easy installation and fewer cables.
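As a sanity check on the power supply sizing, the small sketch below compares the official 1 A per motor with what each supply can deliver. The motor counts and the ~5.4 A and ~8 A figures are the ones mentioned above; the function name is only for illustration.

```python
OFFICIAL_AMPS_PER_MOTOR = 1.0  # official requirement per motor at 18 V


def budget(motor_count: int, supply_amps: float) -> tuple[float, float]:
    """Return (official total demand in A, current available per motor in A)."""
    demand = motor_count * OFFICIAL_AMPS_PER_MOTOR
    per_motor = supply_amps / motor_count
    return demand, per_motor


# Approximate supply currents at 18 V, as stated in the text.
for label, motors, amps in [("DR-100-15", 6, 5.4), ("HDR-150-15", 9, 8.0)]:
    demand, per_motor = budget(motors, amps)
    print(f"{label}: {motors} motors, official demand {demand:.1f} A, "
          f"supply {amps:.1f} A, {per_motor:.2f} A per motor")
```

In both setups each motor gets roughly 0.9 A if all blinds run at once, so about 10 % below the official figure. That is only acceptable because I measured the actual load first.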

DC connectors

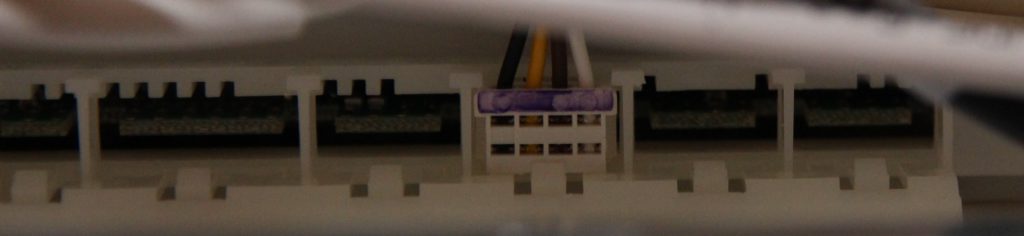

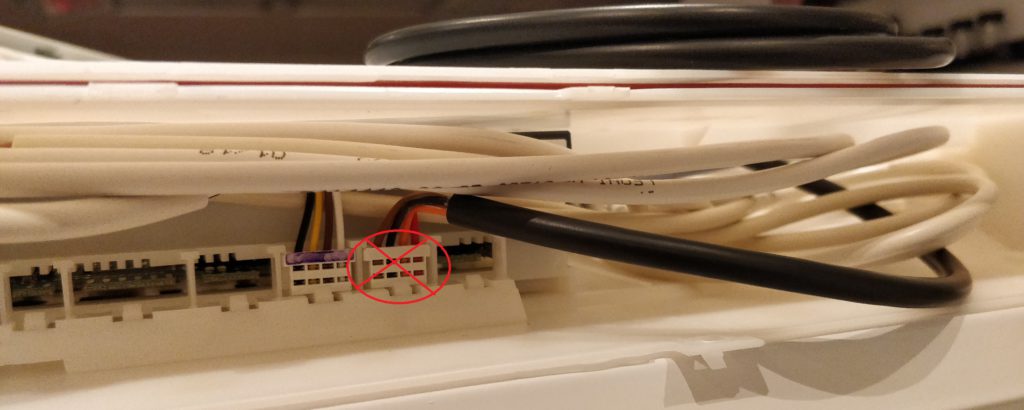

Now the next problem. Standard DC 5.5 x 2.1 mm connectors are used, but the motor doesn’t have an internal connector. Instead it has a short piece of cable (~30 cm) with a male connector on it. Keep that in mind, because that connector needs to be hidden somewhere, and it may have to pass through drilled holes in the ceiling etc. rather than just the cable itself (which would require a smaller hole).

I had to buy a bunch of these female panel mount connectors so that both they and the male connector would fit inside the housing for built-in mounting in the wall:

RF quality

Since PowerView is wireless, we need to look into the RF implementation/performance. The implementation is proprietary, so no integration is possible through ZigBee or Z-Wave. Only Hunter Douglas remotes and the Hub can be used. The Pebble remote I bought with the six original blinds performs quite poorly. It literally needs line-of-sight in order to get transmissions through. If I’m behind a corner in the living room (same room) and want to operate all six blinds at the same time, one or two of the last ones won’t get the signal. So performance is similar to IR.

Luckily the Hunter Douglas Hub (Gen 2) has better range. I initially placed it in my server room, not far from the living room, but still two rooms away. Often one random blind would not receive the command, so I had to move the Hub closer. I first moved it into the same room, very close to the blinds. That improved the setup significantly, so most of the time all blinds would react as they should. However, I was told by support that there’s no feedback from the motors: they just receive 3-5 RF impulses and that’s it. So on rare occasions I can still find one of the blinds in the wrong position. This year I moved the Hub to the other side of a wall, and range is still acceptable, but not completely reliable. That’s not quite good enough in my opinion for a product in this price range.

The worst part is that communication is one-way. This hurts not only reliability, but also synchronization. If the Pebble remotes are used to operate the blinds directly, the Hub will not be informed, and as a result its state is out of sync. This makes it impossible to create home automation rules based on blind state, or at least such rules will not work in conjunction with the Pebble remotes.

Hardware summary

A small recap before we move on to the fun part – the software:

- Voltage: 18 V DC – annoying, but not a showstopper for me.

- DC connectors not integrated – annoying, but I was able to hide them with some effort.

- RF proprietary protocol – bad.

- RF range/reliability – bad, and no work-arounds for this.

Software support

Now the fun part and the reason why I went for Hunter Douglas PowerView back in 2018: I knew that it was supported by Logitech Harmony and also by openHAB. Hunter Douglas also made a nice app to configure and control the blinds through the Hub on the local network.

App

The app works pretty decently. It’s not without issues, but overall it does its job. Initially it is used to configure the Hub, i.e. add the blinds, so they can be controlled. It can also be used to create automations like “open blinds 10 minutes before sunset on weekdays”. For most common scenarios, where conditions are not needed, this is sufficient. Automations live on the Hub, so there are no external dependencies. However, if you want blinds to open at sunset or 6:30, whichever comes last, you will have to use home automation like openHAB or interface directly with the API yourself.
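The “whichever comes last” condition is simple to express once you are outside the app. The sketch below is not openHAB rule code and does not talk to the Hub; it only shows the comparison, with a hypothetical helper name and made-up sun event times as input.

```python
from datetime import datetime, time


def later_of(sun_event: datetime, fixed: time) -> datetime:
    """Return the later of a sun event and a fixed clock time on the same day."""
    # Reuse the sun event's date (and time zone, if any) for the fixed time.
    fixed_dt = datetime.combine(sun_event.date(), fixed, tzinfo=sun_event.tzinfo)
    return max(sun_event, fixed_dt)


# Made-up example values: an early and a late sun event.
early = datetime(2021, 6, 1, 4, 45)
late = datetime(2021, 12, 1, 8, 20)

print(later_of(early, time(6, 30)))  # the fixed time wins
print(later_of(late, time(6, 30)))   # the sun event wins
```

The home automation system would then trigger the scene at the returned time instead of relying on the Hub’s own schedule.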

openHAB

The openHAB binding works like a charm. It lets you control/monitor each blind as well as trigger scenes. That’s really everything you need. Configuration is easy: the Hub is found through discovery, and the blinds can then be added as things.

Logitech Harmony

This integration worked out of the box. The Hunter Douglas Hub was simply added in the Harmony app, and after that it was easy to assign scenes to hardware buttons on my Harmony Elite remote control as well as include a scene in my Movie activity. This means that all blinds will close when starting the Movie activity, and two buttons on the remote are dedicated to triggering open/close scenes.

Scenes can be configured to include any blinds in any individual positions. The downside of using scenes is that the motors operate at slow speed when triggered from a scene (as opposed to direct commands). This is probably implemented this way because the motors are noisy, so at full speed automation wouldn’t be very discreet. However, I would have preferred this to be configurable, and also that the motors were less noisy.

Conclusion

Overall I’m pretty happy with my setup and I have come to enjoy the automation part more and more, especially as we now have nine motorized blinds in the house. Automations based on sunrise/sunset offsets are really nice and convenient. Most of the time we don’t manually control the blinds anymore, as the automation already did what was needed when it was needed.

RF (lack of) reliability is probably the worst part (apart from the installation) since there is no way to fix it. I wonder how it compares to Somfy.